This is a fundamental question. Let’s start with an easy-to-understand analogy and then go deeper.

Core Analogy: AI is a Factory

AI (Artificial Intelligence): This is like a modern car factory. Its goal is to take raw materials (data), process them through a series of complex procedures (algorithmic models), and ultimately produce intelligent products (AI applications like chatbots, image recognition, and self-driving cars).

Computing Power: This is the factory’s total electricity supply. Without electricity, every machine stops. The more abundant and stable the power, the more efficiently the factory runs and the more cars it can turn out, faster and in parallel.

Chips: These are the specific machines on the factory floor, such as stamping presses, welding robots, and assembly lines. Electricity (computing power) is ultimately channeled through these machines (chips) and transformed into actual production capacity.

So, to summarize the relationship simply:

Chips are the physical carriers of computing power. Computing power is the core driver of AI development. And AI is the ultimate application and goal served by chips and computing power. These three form a trinity, collectively constituting the cornerstone of the current intelligent era.

Let’s break down each part in detail:

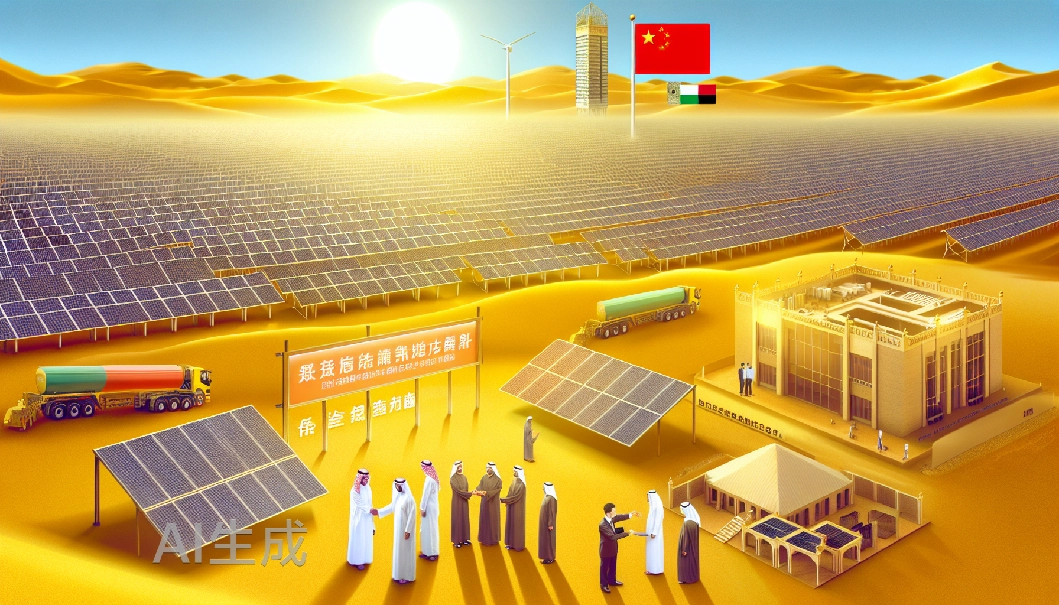

- Chips: The “Engine” and “Hard Foundation” of Computing Power

Chips are the physical hardware that executes computational tasks; they are the source of all computing power. Different types of chips are optimized for different AI tasks.

CPU: Like a “Versatile Professor”. It can handle many different kinds of tasks but is not the fastest at any single one. It is well suited to general-purpose tasks and logic control, but inefficient at the massively parallel computations that AI demands.

GPU: Like a “Formation of Ten Thousand Elementary Students”. No single student is strong at math, but all of them can perform the same simple calculation at the same time (like everyone drawing the same picture). This massively parallel architecture is well suited to both training and inference of AI models, since both involve applying the same matrix operations to vast amounts of data. GPUs are currently the mainstay of AI computing power.

Specialized AI Chips: Like “Super Machines Custom-built for Specific Jobs”. For example:

TPU: Designed by Google specifically to accelerate neural network computations, originally for its TensorFlow framework; for many AI workloads it offers better performance-per-watt than GPUs.

NPU: A specialized processor embedded in devices like phones and cameras, dedicated to accelerating on-device AI applications (e.g., face recognition, photo optimization), with very low power consumption.

FPGA: Like “Lego Blocks”. The hardware logic can be reprogrammed as needed, offering great flexibility, suitable for the exploratory stages where algorithms are still rapidly evolving.

Without advanced chips, there can be no powerful computing power.

- Computing Power: The “Fuel” and “Metric” for AI

Computing power generally refers to the ability of a computing device to perform calculations, measured in operations per second (for AI, usually floating-point operations per second, or FLOPS). It is a key quantitative measure of a chip’s performance.

Why does AI require enormous computing power?

Training Phase: Training a large model like GPT-4 reportedly means running for months on thousands or even tens of thousands of GPUs, processing trillions of tokens (units of text), just so the model can “learn” language patterns. The computing power consumed in this process is astronomical.

Inference Phase: When the model is deployed (e.g., every time you ask ChatGPT a question), each response also consumes computing power. With hundreds of millions of users worldwide interacting simultaneously, the cumulative demand for computing power is equally massive.

The Manifestation of Computing Power: When we hear about “total computing scale” (e.g., a supercomputing center has XXX PetaFLOPS of computing power), it refers to the total number of floating-point operations all its chips can perform per second. Greater computing power allows for larger AI models, faster training times, and quicker responses.
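As a rough illustration of how such a headline figure is put together, the sketch below multiplies the number of chips by each chip’s throughput. The chip count, the 400 TFLOPS per-chip figure, and the `utilization` factor are hypothetical placeholders, not specifications of any real product. Headline PetaFLOPS numbers are usually peak values; real workloads sustain only a fraction of them.

```python
# Aggregate computing power of a hypothetical cluster.
# All numbers are illustrative placeholders, not real product specifications.

TERA = 1e12  # 10^12 operations per second
PETA = 1e15  # 10^15 operations per second


def cluster_flops(num_chips: int, flops_per_chip: float, utilization: float = 1.0) -> float:
    """Total floating-point operations per second across all chips.

    utilization = 1.0 gives the theoretical peak; real workloads achieve less.
    """
    return num_chips * flops_per_chip * utilization


peak = cluster_flops(num_chips=1_000, flops_per_chip=400 * TERA)
sustained = cluster_flops(num_chips=1_000, flops_per_chip=400 * TERA, utilization=0.4)
print(f"peak:      {peak / PETA:.0f} PetaFLOPS")       # 400 PetaFLOPS
print(f"sustained: {sustained / PETA:.0f} PetaFLOPS")  # 160 PetaFLOPS
```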

Without sufficient computing power, the most advanced AI algorithms remain theoretical concepts on paper.

- AI: The “Value Realization” and “Driving Force” for Chips and Computing Power

AI is the final application layer; it drives the demand for chips and computing power.

The Evolution of AI Algorithms: From early, simple decision trees to today’s models with tens or hundreds of billions of parameters, AI models have grown steadily larger and more complex. Each jump in scale multiplies the demand for computing power and specialized chips.

Demand Drives Supply: The intense competition in the AI field from companies like OpenAI, Google, and Meta, constantly pushing for larger and more powerful models, has tremendously stimulated market demand for high-end GPUs (like NVIDIA’s H100/A100) and specialized AI chips, thereby driving innovation and development in the entire chip industry.

Hardware-Software Co-design: Modern AI frameworks (like PyTorch and TensorFlow) are deeply optimized for mainstream hardware (especially GPUs), allowing algorithms to exploit the hardware’s computing power to the fullest. This is “software defining hardware, and hardware empowering software.”

Summary and the Virtuous Cycle

These three elements form a tight, mutually reinforcing Flywheel Effect:

1. AI Sets the Demand: More powerful and intelligent AI models require more computing power.

2. Computing Power Relies on Chips: That computing power must come from more advanced and specialized chips.

3. Chip Technology Breaks Through: Advances in chip technology (e.g., process nodes shrinking from 7nm to 3nm, new chip architectures) deliver more computing power.

4. Computing Power Empowers AI: More computing power makes it possible to train even larger AI models, giving rise to more powerful AI applications.

(Back to Step 1) More powerful AI creates new demand for computing power…

Therefore, in the current era, competition in the chip industry is, to a certain extent, competition in computing power, and that competition directly shapes leadership and influence in AI. Falling behind in any part of this cycle can leave a company or country at a disadvantage throughout the AI era.

Expanded Content: Adding More Dimensions and Future Perspectives

Let’s enrich this picture with more dimensions, details, and forward-looking views.

I. Chips: The Evolution from “General Tools” to “Specialized Weapons”

Chips are the physical foundation of computing power, but they themselves are undergoing a profound paradigm shift driven by AI.

Why aren’t CPUs enough? – The “Professor” vs. “Factory” Metaphor

CPUs follow the von Neumann architecture and are designed for low latency and complex logic control. A CPU is like a versatile university professor: efficient at handling a wide variety of complex tasks, but limited in how many it can work on at once.

The core of AI computing is massively parallel processing and high throughput. It doesn’t require individual calculations to be extremely complex, but demands the ability to perform the same operations (like matrix multiplications and additions) on massive amounts of data simultaneously. This is the strength of the GPU.
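To make this concrete, here is a minimal pure-Python matrix multiplication. For n × n matrices it performs n³ multiply-adds, and every output element C[i][j] comes from the same routine applied to different data, with no element depending on another. A CPU running this code works through the elements one by one; a GPU can assign each element (or tile of elements) to its own thread. The function names are hypothetical, and this illustrates the data-parallel structure only; it is not how production GPU kernels are written.

```python
# Why matrix multiplication suits parallel hardware: every output element is
# produced by the SAME multiply-accumulate routine on different data, and no
# element depends on any other. This code runs them sequentially; parallel
# hardware could compute all (i, j) pairs at once.


def dot_row_col(a_row: list[float], b: list[list[float]], j: int) -> float:
    """Multiply-accumulate one row of A against column j of B."""
    acc = 0.0
    for k in range(len(a_row)):
        acc += a_row[k] * b[k][j]
    return acc


def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    rows, cols = len(a), len(b[0])
    # Each (i, j) task is independent of all the others.
    return [[dot_row_col(a[i], b, j) for j in range(cols)] for i in range(rows)]


A = [[1.0, 2.0], [3.0, 4.0]]
B = [[5.0, 6.0], [7.0, 8.0]]
print(matmul(A, B))  # [[19.0, 22.0], [43.0, 50.0]]
```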

Beyond GPUs: The “Arms Race” for AI Chips

TPU: Google’s Tensor Processing Unit, a type of ASIC (application-specific integrated circuit). It is thoroughly optimized for the “tensor” calculations in neural networks and strips out the graphics-oriented components that GPUs carry, achieving superior performance-per-watt and raw speed on specific AI tasks.

NPU: Neural Processing Units embedded in end-devices like phones and smart cameras. They specialize in on-device AI inference, pursuing extreme low power consumption and real-time performance, enabling “AI on the Edge.”

FPGA: Field-Programmable Gate Arrays, like “remoldable clay.” In the early stages of rapid AI algorithm iteration, their hardware reconfigurability offers great flexibility. While absolute performance and efficiency might not match ASICs, they remain invaluable for prototyping and in specific vertical domains.

Summary: The development path of chips is moving from a “one-size-fits-all” general-purpose computing model towards Domain-Specific Architectures (DSA). This is a direct result of AI application demands forcing hardware innovation.

II. Computing Power: More Than “Fuel” – It’s the “Metric” and “Admission Ticket”

Computing power has evolved from a technical concept into a quantifiable, tradable strategic resource.

“Moore’s Law” vs. the “Scaling Laws” for Computing Power

The traditional Moore’s Law (the number of transistors on a chip doubling roughly every 18-24 months) is slowing down.

A new set of Scaling Laws has emerged in AI: a model’s loss (its prediction error) falls predictably as a power law as model size, dataset size, and training compute grow. Because each constant-factor improvement requires multiplying the compute budget by a roughly fixed factor, achieving steadily more capable models typically requires increasing computing investment exponentially.
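A minimal sketch of what a power-law relationship implies, assuming the simple form L(C) = a · C^(-alpha) for loss L as a function of training compute C. The exponent alpha = 0.05 and coefficient a = 1.0 are illustrative assumptions, not fitted values from any particular study; the point is the shape of the curve, not the numbers.

```python
# Illustrative compute scaling law: L(C) = a * C ** (-alpha).
# ALPHA and the coefficient a are assumptions chosen for illustration only.

ALPHA = 0.05


def loss(compute: float, a: float = 1.0, alpha: float = ALPHA) -> float:
    """Predicted loss as a power law in training compute."""
    return a * compute ** (-alpha)


def compute_multiplier(loss_ratio: float, alpha: float = ALPHA) -> float:
    """Factor by which compute must grow so that new_loss = loss_ratio * old_loss.

    From (C2 / C1) ** (-alpha) = loss_ratio, we get C2 / C1 = loss_ratio ** (-1 / alpha).
    """
    return loss_ratio ** (-1.0 / alpha)


# Every 10% cut in loss costs the same multiplicative jump in compute,
# so k successive cuts require that factor raised to the k-th power.
step = compute_multiplier(0.9)
for k in range(1, 4):
    print(f"{k} x 10% cut: {step ** k:,.1f}x the original compute")
```

Under these assumed values, each 10% reduction in loss multiplies the required compute by roughly 8, which is why linear-looking progress in capability translates into exponential growth in compute.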

The Embodiment of Computing Power: From FLOPS to “AI Factories”

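We use FLOPS (floating-point operations per second) to measure computing power, while the total compute used in a training run is often expressed in petaflop/s-days. Training GPT-3 took about 3,640 petaflop/s-days (roughly 3.14 × 10^23 floating-point operations) on a cluster of NVIDIA V100 GPUs, enough work to keep a single high-end home computer busy for a century or more.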
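The conversion behind these numbers is simple arithmetic. The sketch below turns petaflop/s-days into total operations and then into years on a single machine. The 3,640 figure is the GPT-3 number from the text; the 100 TFLOPS and 1 TFLOPS machine throughputs are hypothetical assumptions, and real sustained throughput is typically well below peak.

```python
# Converting petaflop/s-days into total operations and into time on one machine.
# Machine throughputs below are hypothetical assumptions (peak, not sustained).

SECONDS_PER_DAY = 86_400
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY


def pfs_days_to_flops(pfs_days: float) -> float:
    """One petaflop/s-day = 1e15 operations per second sustained for one day."""
    return pfs_days * 1e15 * SECONDS_PER_DAY


def years_on_machine(total_flops: float, flops_per_second: float) -> float:
    """Years a machine with the given throughput needs to do total_flops of work."""
    return total_flops / flops_per_second / SECONDS_PER_YEAR


gpt3_flops = pfs_days_to_flops(3640)
print(f"{gpt3_flops:.2e} FLOPs")  # 3.14e+23 FLOPs

for label, rate in [("100 TFLOPS machine", 100e12), ("1 TFLOPS machine", 1e12)]:
    print(f"{label}: ~{years_on_machine(gpt3_flops, rate):,.0f} years")
```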

Modern hyperscale data centers are evolving into “AI Factories”, where power, networking, and cooling systems all exist to serve computing power. Computing power is becoming an infrastructure utility, available on demand through cloud services.

The Cost of Computing Power: The Barrier to AI Innovation

The cost of training a single top-tier large model can run into tens or even hundreds of millions of US dollars. This puts cutting-edge AI R&D out of easy reach for startups and academic institutions, making computing power a prohibitively expensive admission ticket to AI innovation.
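A back-of-the-envelope estimate shows how such figures arise: multiply the number of GPUs by the wall-clock hours and an hourly price. The GPU count, run length, and $2 per GPU-hour rate below are hypothetical placeholders, not reported figures for any real model, and the estimate ignores staff, failed experiments, data, and storage.

```python
# Rough training cost: GPUs x hours x hourly price.
# All inputs are hypothetical placeholders; overheads are deliberately ignored.


def training_cost_usd(num_gpus: int, days: float, usd_per_gpu_hour: float) -> float:
    """Compute-only cost of a training run rented by the GPU-hour."""
    gpu_hours = num_gpus * days * 24
    return gpu_hours * usd_per_gpu_hour


cost = training_cost_usd(num_gpus=10_000, days=90, usd_per_gpu_hour=2.0)
print(f"${cost / 1e6:.1f} million")  # $43.2 million
```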

III. AI: From “Algorithmic Model” to “Productivity Engine”

AI is not just the end point of technology; it is also the starting point that drives the entire cycle.

AI’s “Insatiable” Appetite for Computing Power

Training: It’s like making an AI read all the text on the internet and learn its patterns, which requires “burning” through vast amounts of computing power.

Inference: When models are deployed, the simultaneous access by hundreds of millions of global users constitutes a continuous, massive drain on computing power.
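A common rule of thumb from the scaling-law literature quantifies both phases for dense transformer models: training costs roughly 6 · N · D floating-point operations (N parameters, D training tokens, forward plus backward pass), and generating one token at inference costs roughly 2 · N. Plugging in GPT-3’s published 175 billion parameters and 300 billion training tokens gives about 3.15 × 10^23 operations, consistent with the roughly 3,640 petaflop/s-days reported for GPT-3. The reply length and daily request volume below are hypothetical.

```python
# Rule-of-thumb FLOP estimates for dense transformer models:
#   training  ~ 6 * N * D  (forward + backward pass over D tokens)
#   inference ~ 2 * N      per generated token (forward pass only)
# These approximations ignore attention-specific costs and other overheads.


def training_flops(params: float, tokens: float) -> float:
    return 6 * params * tokens


def inference_flops(params: float, tokens_generated: int) -> float:
    return 2 * params * tokens_generated


N = 175e9  # GPT-3 parameter count
D = 300e9  # GPT-3 training tokens
print(f"training:  {training_flops(N, D):.2e} FLOPs")  # 3.15e+23

# Hypothetical usage: 500-token replies, 100 million requests per day.
per_reply = inference_flops(N, 500)
print(f"one reply: {per_reply:.2e} FLOPs")
print(f"per day:   {per_reply * 100e6:.2e} FLOPs")
```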

AI Empowers Chip Design in Return

This is a fascinating feedback loop. Companies are now using AI to help design more complex chips.

Google has reported using reinforcement learning to generate chip floorplans in under six hours, a task that takes human engineers months, and has applied the technique to the design of its own TPUs. This dramatically accelerates the development of next-generation chips and creates a self-reinforcing loop: “AI designs chips, chips empower stronger AI.”

Conclusion: The Dynamic Flywheel and Future Challenges

The flywheel formed by chips, AI, and computing power is spinning at high speed:

The Ambition of AI Models (Larger, Smarter) → Creates Insatiable Demand for Computing Power → Drives Disruptive Innovation in Chip Technology (GPU, TPU, …) → Gives Rise to More Powerful Computing Clusters (AI Factories) → Enables the Training of Even More Powerful AI Models → …

Future Outlook and Challenges:

The Power Wall: The growth of computing power comes with enormous energy consumption. Future competition will be not only about computing power but also about “compute per watt,” which places extreme demands on chip process technology and cooling.
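“Compute per watt” is simply throughput divided by power, and it determines how much energy a given workload needs. The sketch below compares two hypothetical accelerators (`chip_a` and `chip_b`, with made-up specifications) on a hypothetical workload of 10^23 operations. It assumes both run at peak efficiency, so the results are lower bounds that exclude cooling, networking, and idle time.

```python
# Comparing hypothetical accelerators by compute per watt and energy use.
# Names and specifications are made up for illustration.

from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    peak_tflops: float  # peak throughput in TFLOPS
    watts: float        # board power in watts

    def tflops_per_watt(self) -> float:
        return self.peak_tflops / self.watts


def energy_mwh(total_flops: float, chip: Accelerator) -> float:
    """Energy in MWh to perform total_flops at the chip's peak efficiency."""
    flops_per_joule = chip.tflops_per_watt() * 1e12  # 1 W = 1 J/s
    joules = total_flops / flops_per_joule
    return joules / 3.6e9  # 1 MWh = 3.6e9 J


chips = [Accelerator("chip_a", 400, 700), Accelerator("chip_b", 300, 350)]
for c in chips:
    print(f"{c.name}: {c.tflops_per_watt():.2f} TFLOPS/W, "
          f"{energy_mwh(1e23, c):.1f} MWh for 1e23 operations")
```

Note that the slower hypothetical chip (`chip_b`) finishes the same workload with less energy, which is exactly why “compute per watt,” not raw speed alone, becomes the decisive metric at data-center scale.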

The Ecosystem Barrier: NVIDIA has built a powerful moat with its CUDA software ecosystem. Future competition is not just about hardware but also about software platforms and developer ecosystems.

Autonomy and Control: For nations worldwide, the ability to design and manufacture advanced AI chips and build autonomous computing power infrastructure has become a core strategic issue related to national technological sovereignty and industrial security.

Therefore, understanding the relationship between chips, AI, and computing power is not merely about understanding a technology; it is key to understanding the underlying logic of technological progress in our time and the global competitive landscape for the next decade.